The Journey

How neargrounds went from idea to a working app, built entirely with AI tools.

The Idea

I wanted a simple way to find campgrounds worth driving to. Not a booking engine, not a review aggregator — just a curated shortlist based on where I am and how far I'm willing to go. The kind of recommendations you'd get from a friend who's camped everywhere.

The concept: type in your zip code and a distance, toggle a few filters, and get back a handful of campgrounds pulled from real sources — Reddit threads, camping blogs, state park databases. Behind the scenes, an AI reads all of it and gives you the highlights.

The Stack

The app runs on Next.js hosted on AWS Amplify, with a Supabase database for caching and configuration. When you search, the app calls Amazon Bedrock (AWS's managed AI service, here running a Claude model) to generate campground recommendations. Results are cached, so repeated searches are instant and free.
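The post doesn't say how the cache is keyed, so here is a minimal sketch under one assumption: a search is identified by its zip code, distance, and filters, normalized so that equivalent searches land on the same entry. The `SearchParams` shape, `cacheKey`, `getRecommendations`, and the in-memory `Map` (standing in for the Supabase cache table) are all hypothetical, not neargrounds' actual code.

```typescript
import { createHash } from "crypto";

// Hypothetical shape of a search request; the real app's fields may differ.
export interface SearchParams {
  zip: string;
  distanceMiles: number;
  filters: string[];
}

// Build a deterministic cache key so equivalent searches share one cache entry.
export function cacheKey(params: SearchParams): string {
  // Keep only the 5-digit zip, so "97201-1234" and "97201" match.
  const zip = params.zip.trim().slice(0, 5);
  const distance = Math.round(params.distanceMiles);
  // Lowercase, dedupe, and sort filters so toggle order doesn't matter.
  const filters = Array.from(
    new Set(params.filters.map((f) => f.trim().toLowerCase())),
  ).sort();
  const canonical = JSON.stringify({ zip, distance, filters });
  // Hash the canonical form into a fixed-length string usable as a primary key.
  return createHash("sha256").update(canonical).digest("hex");
}

// In-memory stand-in for the Supabase cache table (hypothetical).
const cache = new Map<string, string>();

// Return a cached result if one exists; otherwise call the model once and store it.
export async function getRecommendations(
  params: SearchParams,
  generate: (p: SearchParams) => Promise<string>, // e.g. the Bedrock call
): Promise<string> {
  const key = cacheKey(params);
  const hit = cache.get(key);
  if (hit !== undefined) return hit; // cache hit: no model call, no cost
  const result = await generate(params);
  cache.set(key, result);
  return result;
}
```

Normalizing before hashing is the part that matters: without sorting the filters, toggling the same two filters in a different order would miss the cache and trigger another paid model call.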

The admin panel lets me edit the AI prompt, manage search access, clear the cache, and monitor usage — all from a browser. No local development environment required.

The entire codebase was generated and debugged through AI chat conversations. I made edits through GitHub's web editor and ran database commands in Supabase's SQL console. No IDE, no terminal on my machine.

The Build

I started by describing what I wanted to Claude Sonnet, and it generated a full working codebase: frontend, backend, database schema, API routes, admin dashboard. Structurally solid. The code compiled cleanly.

Then I tried to deploy it, and that's where the real work began.

The hosting platform thought my app was a static website and returned a 404 error on every page. The fix was a single platform setting that couldn't be changed through the AWS console UI; I had to use a command-line tool instead. The AI walked me through finding it.

After that came a cascade. The Node.js version was too old, and the platform rejected it after the build finished rather than during it, which was confusing. The Claude model wasn't enabled in my AWS account. The model ID format was wrong: a subtle prefix difference between direct access and cross-region access. The model's responses were so large that requests timed out on the server. And the authentication system worked locally but broke on the hosting platform because cookies weren't being forwarded correctly.
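To illustrate the model ID pitfall: on Bedrock, cross-region inference goes through an inference profile whose ID is the base model ID with a geographic prefix such as `us.`. The helper here is a hypothetical sketch (`modelIdFor` is not from the project), and the model ID in it is only an example; check the Bedrock console for the IDs your account can actually invoke.

```typescript
// Example base model ID only; the app's actual model may differ.
export const BASE_MODEL_ID = "anthropic.claude-3-5-sonnet-20241022-v2:0";

export type Geo = "us" | "eu" | "apac";

// Direct (single-region) invocation uses the bare model ID.
// Cross-region inference uses an inference profile ID: the same string
// with a geography prefix, e.g. "us.anthropic.claude-...".
export function modelIdFor(baseId: string, crossRegion: boolean, geo: Geo = "us"): string {
  if (!crossRegion) return baseId;
  // Don't double-prefix if the caller already passed a profile ID.
  if (/^(us|eu|apac)\./.test(baseId)) return baseId;
  return `${geo}.${baseId}`;
}
```

Centralizing the ID in one function means the prefix decision lives in a single place instead of being hand-typed into each call site.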

Each issue followed the same loop: the AI would tell me to add logging, I'd deploy, read the actual error message in the server logs, and then we'd fix the real problem. That loop — add logging, read the real error, fix the real problem — was the most productive pattern of the entire project.
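The add-logging loop can be turned into a habit with a small wrapper around any async server function. This is a hypothetical sketch (`withErrorLogging` is not from the project): it logs the real error name, message, and stack, plus the `$metadata` object that AWS SDK v3 errors carry, then rethrows the original error so the caller still fails. Anything written with `console.error` from server code is what you'd then go read in the server logs.

```typescript
// Wrap an async function so any failure is logged in detail before propagating.
export function withErrorLogging<A extends unknown[], R>(
  label: string,
  fn: (...args: A) => Promise<R>,
): (...args: A) => Promise<R> {
  return async (...args: A) => {
    try {
      return await fn(...args);
    } catch (err) {
      if (err instanceof Error) {
        console.error(`[${label}] ${err.name}: ${err.message}`);
        if (err.stack) console.error(err.stack);
      } else {
        console.error(`[${label}] non-Error value thrown:`, err);
      }
      // AWS SDK v3 errors include $metadata (HTTP status, request ID).
      const meta = (err as { $metadata?: { httpStatusCode?: number; requestId?: string } })?.$metadata;
      if (meta) {
        console.error(`[${label}] AWS status=${meta.httpStatusCode} requestId=${meta.requestId}`);
      }
      throw err; // rethrow the original error unchanged
    }
  };
}
```

Rethrowing instead of swallowing keeps behavior identical; the wrapper only adds visibility.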

Partway through deployment debugging, I shifted from Claude Sonnet to Claude Opus. Sonnet was great for generating the initial codebase — fast, clean, well-structured. But when issues started compounding across platform settings, auth patterns, and model configuration, Opus was noticeably better at holding the full context and reasoning through cascading failures. If I were starting over, I'd use Sonnet for the initial build and switch to Opus as soon as deployment gets real.

The Teardown

After getting the first version working, I stepped back and realized the architecture was overkill. The AI had built a full user management system — signup, login, email verification, admin approval, role-based access, budget tracking. For a hobby site with a handful of friends using it, that was a lot of moving parts creating a lot of surface area for bugs.

So I scrapped the entire authentication system and replaced it with a simple shared passkey. One passkey to search, one to access admin. No user accounts, no signup flow, no approval queue. This meant deleting database tables, dropping foreign key constraints, updating security policies, and removing an entire dependency from the project.

The AI generated a complete replacement codebase in one conversation. The rebuild was cleaner and faster than continuing to patch the original.
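A minimal sketch of the shared-passkey check, assuming the two passkeys live in environment variables named `SEARCH_PASSKEY` and `ADMIN_PASSKEY` (hypothetical names; the app's real configuration may differ). Comparing with Node's `crypto.timingSafeEqual` rather than `===` avoids leaking how many leading characters matched, and hashing both sides first keeps the buffers the same length, which `timingSafeEqual` requires.

```typescript
import { createHash, timingSafeEqual } from "crypto";

// Hash a value so both sides of the comparison are always 32 bytes long
// (timingSafeEqual throws if the buffer lengths differ).
function digest(value: string): Buffer {
  return createHash("sha256").update(value, "utf8").digest();
}

// Constant-time check of a submitted passkey against the expected one.
export function passkeyMatches(
  submitted: string | null | undefined,
  expected: string | undefined,
): boolean {
  if (!submitted || !expected) return false; // missing input or config: deny
  return timingSafeEqual(digest(submitted), digest(expected));
}

export type Access = "none" | "search" | "admin";

// Hypothetical env var names. Checks the admin passkey first, then the search passkey.
export function accessLevel(
  submitted: string | null | undefined,
  env: Record<string, string | undefined> = process.env,
): Access {
  if (passkeyMatches(submitted, env.ADMIN_PASSKEY)) return "admin";
  if (passkeyMatches(submitted, env.SEARCH_PASSKEY)) return "search";
  return "none";
}
```

With no user table, this one function replaces the signup, login, verification, and approval paths, which is the surface-area reduction the teardown was after.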

Design Iteration

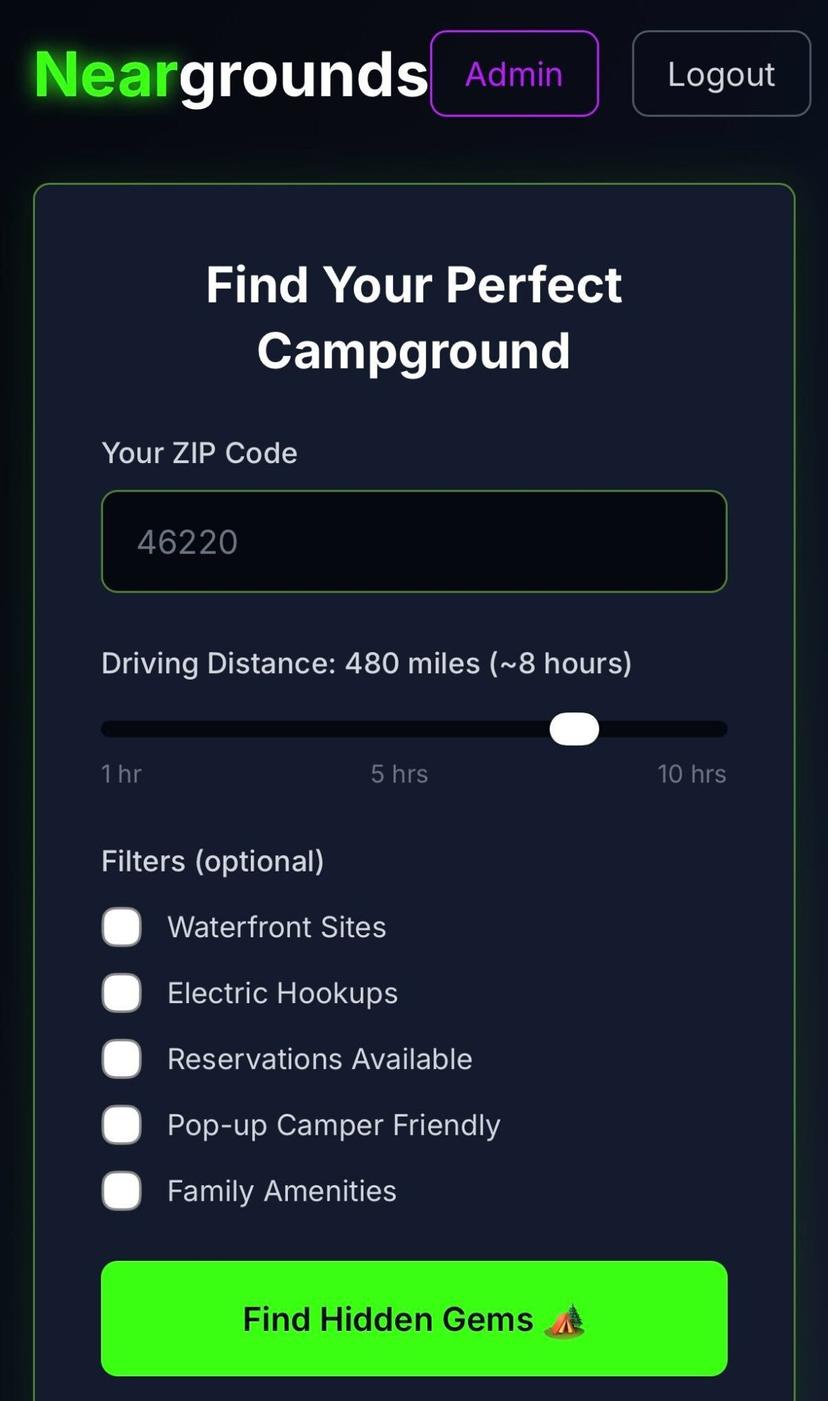

The first version looked like a developer's side project — dark theme, neon green accents, "Find Hidden Gems" with a tent emoji. It worked, but it didn't feel like something I'd want to hand to someone.

v1 — proof of concept

v2 — current design

The redesign started with a color swatch I pulled from an outdoor gear catalog — olive greens, bark browns, muted golds. From there, I iterated on typography (landed on a typewriter font for the field-guide feel), replaced placeholder graphics with hand-drawn camping icons, and refined contrast and hierarchy across multiple rounds of feedback.

Each round was the same: I describe what feels off, the AI generates updated code, and I deploy and evaluate on my phone. Some things, like making sure filter labels don't wrap to two lines on mobile or getting the right opacity on background icons so they feel present but not distracting, took several passes.

What I Learned

Start simpler than you think. The AI's first pass included every feature I described and several I didn't ask for. For a hobby project, starting with the minimum viable version and adding complexity later would have saved significant debugging time.

AI-generated code works in isolation but breaks at the seams. The code itself was clean and well-structured. Nearly every issue was at an integration point — a hosting platform setting, a model ID format, a database column type that didn't match what the code was writing. These are the things an AI can't know without seeing the actual deployment.

Full rebuilds can be faster than patches. When I'd accumulated enough friction with the auth system, scrapping it entirely and regenerating the codebase was faster and produced cleaner results than continuing to fix things incrementally.

Server logs are the breakthrough. Every time I was stuck, the answer came from adding a few logging lines and reading what actually happened on the server. The AI couldn't guess what was wrong from a generic error message, but given the real error it could fix it immediately.

You don't need a development environment. This entire project was built through chat interfaces, a web-based code editor, and a database console. No IDE, no local server, no terminal. That's a genuinely new way to build software, and it worked.